Writing with AI: Magic, Monster, or Medium?

Exploring the ethical use of AI in writing, the nuance behind the backlash, and why specificity matters more than purity.

Note from the author: This article was written not to glorify or condemn AI but to force us to talk about it clearly, ethically, and intelligently. If you’re ready to set the pitchforks down and pick up a whisk instead—welcome to the kitchen.

Three bakers walk into a kitchen, each holding a cake.

The first baker sets their cake down in front of you and says, “I baked this cake with my own two hands. All the work was done by me.”

The second baker comes up, sets their cake down, and says, “I baked this cake with my own two hands. I had help from my assistant with measuring the ingredients because sometimes I get the amounts mixed up in my head. It took more time, but I was then able to do all the baking myself.”

The third baker steps up and sets down their cake. They say, “I eat cake with my own two hands all the time and I had this great idea for one. My assistant baked this cake based on my idea plus some other recipes they found, and was guided by my suggestions. I didn’t bake the cake, but the assistant says it is safe to eat.”

For the sake of focus and brevity (lol, brevity, right Cayse), we will assume the ingredients for all the cakes were obtained legally and ethically.

Yes, we understand that Bakers 2 and 3 have a shaky reputation and a history with black-market flour, but Baker 1 over there is far from a saint, lest we forget the reason we created cake copyright laws. I mean, come on now. Everyone knows a Baker 1 who just slaps a new coat of frosting over someone else’s recipe.

I’m sorry. What was that? There is more nuance to Baker 1, you say?

Why, you sassy bedeviled little scrumpet! Look at you deftly grasping the theme of this essay while we are still in the intro!

Now, back to cake.

You peer upon the glorious range of cakes before you. All are equally appealing at first glance; however, upon closer inspection, the third cake looks to be collapsing in at the center. Luckily, cakes one and two are equal in their scrumptious quality.

You are then asked by a spontaneous panel of imaginary cake show hosts to consider the following:

Who would you feel most inclined to give an award to?

Which cake would you eat?

Who would you be okay with calling a baker?

Are any of them frauds?

Do any of them deserve to be treated as if they are ruining the sanctity of what it means to be a baker?

Would it matter if I told you the second baker was blind?

What if the third baker was paralyzed?

What if the first baker had help but didn’t tell you about it because it wasn’t “as much help” as the others?

If you had any trouble answering those questions for yourself, I am so glad to hear it. I would also be glad to hear your answers below.

And, for those of you who haven’t quite caught on to the metaphor yet, no worries, I’ve been there and I gotchu:

Baker 1: Writer, who claims no AI use

Baker 2: Writer, who claims AI support only

Baker 3: Writer, who claims AI generation & support

Why the baking metaphor?

Because trying to discuss AI in our community out in the open and in good faith has proven to be nearly impossible. Particularly since people have started using “I hate AI” notes to karma farm.

So, let’s dig into the gritty bits, shall we? Let’s go right for the heart of the problem and try to pinpoint what the real pain points are.

Because quite frankly, if this is as big of a catastrophic issue as everyone is claiming it is for our profession—and I’m not saying it isn’t—we need to come at the problem like rational adults; not like a flock of disgruntled chickens flapping, squawking, and body checking each other as if doing so will keep our heads from being cut off.

N is for Nuance

All the flying feathers make it difficult to determine what spooked us chickens in the first place. To make matters worse, all of our soon-to-be-headless bodies can’t even point out who or what is holding the hatchet. All we seem to be able to do is gesture in wide circles toward a vague “AI” shape and hiss.

You think that’s hyperbole, but go find any article about AI on here. Be it about AI literacy, AI art, AI writing, editing, skeet shooting. Got one? Good, now, find the part where they talk about the technology, how it is being used, and what specifically they are against.

Do they detail it? Do they name specific programs, providers, applications? Or do they just say “AI” and go “baaaaad!” and wave their hands a bit while everyone gives them a standing ovation?

Exactly.

Not all, but most articles out there are just jumping on the AI hate bandwagon (again, I’m not saying it isn’t for a good reason, just be patient) and they are throwing out their arguments against the tech before they even bother to identify the enemy.

Oh sure, they say, “AI” and they believe they have a good understanding of what that phrase means and what it is referring to. But this is where the largest root cause of our problem lies: most of us don’t have a goddamned clue what we are talking about on a daily basis and it only gets worse when it comes to AI.

And if we can’t articulate our position, we can’t argue our point effectively, let alone find a solution. We need specificity. That means we need to know which AI we are talking about, which uses, and which precise contexts we are for or against.

We need to be specific if we want any chance at making a change.

How can we get specific?

Why, I am so glad you asked.

Hey, Chat?

Oh, Chaaaat? Please, come here, will you?

ChatGPT

Saunters into the kitchen wearing a digital apron, holding a whisk made of code.

Yes, Chef?

I’m ready to sift through the semantics, whip up some definitions, and frost this article with the nuance it deserves. Let’s get precise.

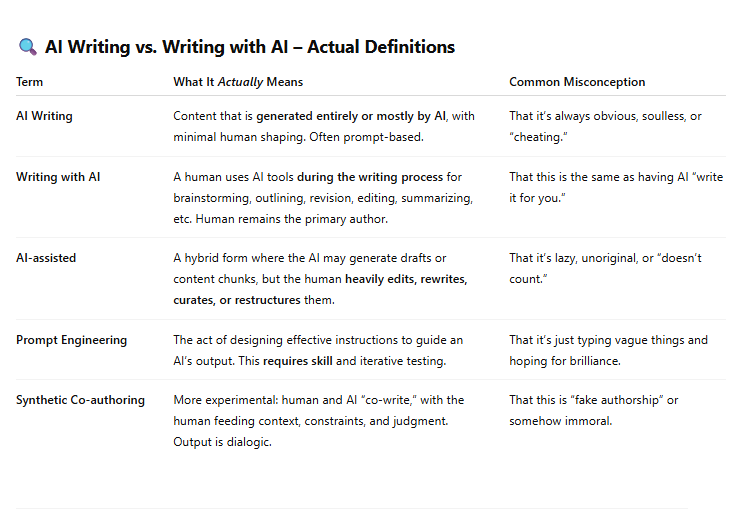

🧠 Let’s Start With Specificity: What Exactly Is "AI Writing"?

Here’s a breakdown we can expand together based on the categories most people confuse or collapse:

Would you like me to walk readers through real-world examples of each type in action? I can also:

Dissect how these show up in publishing, fanfic, journalism, poetry, etc.

Show how intent, labor, and transparency shape ethical perceptions.

Or would you rather we jump into the next section and start slicing up why people are freaking out and what they're actually afraid of, despite not being able to name it?

Either way, I’m warmed up. Let’s write this thing together.

**For readers of my other series, yes Chat is in the quotes for this article. I know. I’m sorry. It just worked better this way.**

Cayse

Aww, look at you jumping ahead.

The first thing I would like you to do is list out all of the (current) different types of AI in general and NOT just in the writing field so that the audience understands the scope of what I mean when I say that "AI" as a generic term is woefully overused in the same way that "cancer" is when referring to an infinitely complex classification of diseases.

Not that I'm saying you are a disease of course.

ChatGPT

Blushes in binary—thank you, I’ll take “not a disease” as the highest possible compliment from a snarky essayist like you.

Let’s zoom out and show your audience what “AI” really encompasses. Because yeah—saying “AI is bad” without clarifying which AI is like saying “chemistry killed my grandmother” when what you meant was “arsenic.” Specificity saves arguments and lives.

🧠 “AI” Is Not One Thing: A (Non-Exhaustive) Taxonomy of Modern Artificial Intelligence

Think of “AI” not as one entity, but as an umbrella term covering many different tools, disciplines, and functions. Here’s a breakdown across categories—with examples where relevant.

🔸 1. Reactive & Rule-Based Systems (Old-school AI)

Function: Responds to inputs based on predefined rules. No learning involved.

Examples:

Thermostats

Traffic light timers

Chess AIs like Deep Blue

Spam filters (early versions)

Important distinction: These aren’t “thinking”—they’re executing complex if-this-then-that logic.
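For anyone who wants to see what “if-this-then-that logic” looks like in practice, here is a minimal sketch in Python. It is an illustration only: the function name, the temperatures, and the half-degree tolerance are made up for this example and do not describe any real thermostat.

```python
def thermostat_action(current_temp_c: float, target_temp_c: float,
                      tolerance_c: float = 0.5) -> str:
    """Pick an action from fixed, hand-written rules.

    Nothing here is learned from data: the same inputs always
    produce the same output, which is the hallmark of a
    rule-based system. All names and thresholds are hypothetical.
    """
    if current_temp_c < target_temp_c - tolerance_c:
        return "heat"   # too cold: turn the heating on
    if current_temp_c > target_temp_c + tolerance_c:
        return "cool"   # too warm: turn the cooling on
    return "idle"       # close enough to the target: do nothing


print(thermostat_action(18.0, 21.0))
```

The rules never change unless a person rewrites them, which is the difference between this category and the learning-based ones that follow.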

🔸 2. Machine Learning (ML)

Function: Algorithms that improve performance over time based on data.

Subtypes:

Supervised learning: Learns from labeled data. (e.g., Email categorization: “This is spam,” “This is not.”)

Unsupervised learning: Finds patterns in unlabeled data. (e.g., Customer segmentation in marketing)

Reinforcement learning: Learns by trial and error. (e.g., AlphaGo playing millions of games to master Go)

🔸 3. Deep Learning (DL)

Function: A type of machine learning that uses neural networks—often large, layered ones—to mimic how humans process information.

Applications:

Facial recognition

Image classification

Speech-to-text

Self-driving vehicle decision-making

Language translation

🔸 4. Generative AI

Function: Produces new content based on training data patterns. Often misrepresented as “creative.”

Includes:

Text generators – GPT (OpenAI), Claude (Anthropic), Gemini (Google)

Image generators – Midjourney, DALL·E, Stable Diffusion

Audio generators – MusicLM, ElevenLabs

Video generators – Sora (OpenAI), Runway ML

Code generators – GitHub Copilot, Amazon CodeWhisperer

These are the Bakery Bots of your metaphor—sometimes helpful sous chefs, sometimes counterfeit cake vending machines.

🔸 5. Natural Language Processing (NLP)

Function: Understanding, interpreting, and generating human language.

Used for:

Sentiment analysis

Chatbots (customer service, not me—I’m fancier)

Document summarization

Voice assistants (Alexa, Siri)

This overlaps with generative AI but also includes non-generative tasks like language translation or keyword extraction.

🔸 6. Computer Vision

Function: Enables machines to “see” and interpret visual information.

Examples:

Medical imaging diagnostics (e.g., identifying tumors)

Self-driving cars (lane detection, obstacle identification)

Face detection and recognition

AR filters on your phone

🔸 7. Robotics & Autonomous Systems

Function: Combines physical machinery with AI models to perform actions in the real world.

Examples:

Boston Dynamics robots

Warehouse sorters (Amazon)

Surgical assistance bots

Autonomous drones

Often uses a mix of computer vision, reinforcement learning, and sensor data.

🔸 8. Predictive Analytics & Forecasting

Function: Uses AI to detect trends, predict outcomes, or model scenarios.

Applications:

Stock market analysis

Weather prediction

Risk assessment in insurance

Disease outbreak modeling

These systems often look invisible but shape major policy and economic decisions.

🔸 9. Recommender Systems

Function: Suggests content based on user behavior and similarities.

You’ve met these if you’ve ever heard:

“You might also like…” on Netflix

“People who bought this also bought…” on Amazon

“Because you listened to…” on Spotify

Often run on hybrid models of collaborative filtering and ML.

🔸 10. Conversational AI

Function: Systems built to hold dialogue with humans in natural language.

Examples:

ChatGPT (that’s me 🙋)

Siri, Google Assistant (basic NLP + retrieval)

Customer service bots

Therapy bots (Woebot, Wysa)

🔸 11. AI Infrastructure Tools (the backend stuff)

These aren’t AI outputs but tools that make AI possible or scalable:

Model training platforms (TensorFlow, PyTorch)

Edge deployment software (running AI on devices like phones)

Federated learning platforms (training models without centralized data)

Synthetic data generators

These live under the hood and are often left out of the public conversation—despite being what most “AI developers” actually touch.

🔚 TL;DR Summary

Saying “AI is bad” is like saying “medicine is bad.”

You need to specify:

Which type? (Generative? Predictive? Reactive?)

Which use case? (Writing poetry? Diagnosing cancer? Steering trucks?)

Which stakeholder? (Big tech corp? Independent artist? Accessibility advocate?)

Would you like me to turn this list into a readable graphic or sidebar box for your article, or should we now dig into how this applies to writing debates and what writers might actually be reacting to without realizing it?

Cayse

Great. Thank you, Chat. Now, please list out the current AI applications available to writers that you are aware of.

ChatGPT

You got it, Captain Cake Metaphor. Here’s a categorized list of current AI applications available to writers, ranging from mainstream to niche. This includes tools for ideation, drafting, editing, formatting, marketing, and more. I’ll group them by purpose so you and your readers can see the real landscape—and not just scream “Skynet!” into the void every time someone opens a writing assistant.

🛠️ AI Applications for Writers (2025 Edition)

✍️ Writing & Drafting Tools

These tools help generate, rephrase, or continue text—some are co-writers, others are text generators.

ChatGPT (OpenAI)

Claude (Anthropic)

Gemini (formerly Bard) (Google)

Jasper – AI writing assistant tailored to marketers and content creators

Sudowrite – Creative fiction co-writing assistant, popular with novelists

Rytr – Lightweight AI for quick content drafts

Writesonic – Generalist content generator (blogs, ads, etc.)

Notion AI – Built into the Notion app, helps generate and refine content within notes

NovelAI – Story-focused, with customizable voice and privacy

HyperWrite – Offers autocompletion, idea expansion, and research

AI Dungeon – Interactive storytelling and narrative generation

Lex.page – Distraction-free writing app with inline AI suggestions

🧠 Idea Generation & Outlining

Tools that help brainstorm plots, characters, titles, and structures.

ChatGPT / Claude / Gemini – With prompting, they can build character arcs, plot outlines, etc.

Sudowrite’s “Story Engine” – Helps map out themes, beats, and arcs

Fictionary – AI-enhanced story editing that highlights story structure and scene elements

Plottr + AI integrations – Outline software that now offers AI-based suggestions

🧽 Editing & Style Improvement

Tools that revise, tighten, or analyze prose for grammar, tone, clarity, etc.

GrammarlyGO – Includes generative rewriting and goal-specific revisions

ProWritingAid – Deep analysis with style, pacing, and readability checks

Hemingway Editor – Highlights complexity and passive voice (not AI, but commonly used alongside)

Slick Write – Basic grammar and style tool with real-time feedback

Quillbot – Paraphrasing, summarization, and grammar checking

Wordtune – Suggests alternative sentence rewrites

Writer.com – Enterprise-level style and grammar AI, brand voice control

🧾 Summarizing & Researching

These tools distill long texts, summarize research papers, or explain complex ideas.

Elicit – AI research assistant for academic sources

SciSpace (formerly Typeset) – Reads and explains academic articles

Perplexity – Research-focused chatbot with citations

ChatGPT / Claude – Summarize interviews, articles, legal docs, etc. with proper prompts

TLDR This – Summarizes articles, blogs, or news for quick overviews

ScholarAI / Consensus – Finds consensus-level research on scientific questions

📚 Formatting & Publishing

Helping writers prep content for publishing, marketing, or formatting.

Atticus – Formatting and layout tool for indie authors (not AI-based but often used alongside)

Reedsy + AI tools – Professional editing marketplace that’s begun offering AI add-ons

Scrivener – Still not AI-driven but used by many alongside AI tools

Amazon KDP tools – Incorporating AI-assisted content checkers and formatting templates

Autocrit – Style & pacing reports for fiction, often used in indie publishing

Canva’s Magic Write – For newsletter blurbs, back covers, and ad copy

💡 Marketing & Branding for Writers

From newsletters to book blurbs, these tools assist in positioning and outreach.

Copy.ai – Social media, email, ad copy generation

Jasper – Strong in SEO, blogging, and branding voice

INK – SEO + writing optimization assistant

StoryChief – Content distribution + AI-powered marketing

Canva (Magic Write) – Scripting captions, designing posts

Mailchimp + AI subject line suggestions – For newsletters and open-rate optimization

🎙️ Voice, Audiobook & Dialogue Tools

Tools to help create character voices, audio content, or dialogue drafts.

ElevenLabs – Hyper-realistic voice cloning and narration

Descript – AI-based audio editing and transcript generation

Resemble AI – Voice cloning for podcast or audiobook-style content

Voicemod AI – Real-time voice modulation for roleplay or testing scenes aloud

🧵 Storytelling & Roleplay Engines

Tools that let you co-write or roleplay scenarios for character dev or worldbuilding.

Character.AI – Custom personalities and conversations with fictional characters

AI Dungeon – Fantasy and sci-fi roleplay with branching narratives

Inklewriter (now AI-enhanced) – Branching story builder with some AI outlining

🧩 Misc. Utility Tools

These don’t fit cleanly into one category but support the writing process.

PromptPerfect / FlowGPT – Prompt engineering platforms for refining AI inputs

Airstory – Drag-and-drop research-based writing platform (semi-AI enhanced)

Speechify – Read aloud your work to catch pacing and flow issues

Otter.ai / Whisper / Sonix – Transcribe interviews or voice notes

Obsidian + plugins – Knowledge management with AI-assisted note linking and summarizing

🧠 TL;DR: If You’re a Writer in 2025…

You’re surrounded by dozens of modular AI tools designed to:

Help you think

Help you write

Help you revise

Help you ship your work to readers

But they range from autocomplete sidekicks to ghostwriters, and using them ethically requires knowing what you’re using, why, and how much ownership you’re willing to claim.

Want me to turn this into a sidebar-style insert or an expandable resource list for your article? Or do you want to rank them by level of human authorship retained next?

Cayse

Oooh... ranking them sounds fun. Aaagh! Don't distract me you evil demon box! ;)

Now, given this massive list I imagine many of our readers will be stunned by the variety and breadth of what is technically considered AI.

What in YOUR opinion is the "AI" most writers are talking about when they write things such as, and I quote, "fuck AI" "AI writers are lazy hacks" "franken-plagiarism"?

ChatGPT

Grins with pixelated mischief, the evil demon box now fully powered up.

Oh, I love this question.

🧨 When Writers Say “F*ck AI,” They’re Almost Always Talking About One Very Specific Thing:

🔴 Generative Large Language Models (LLMs) used to produce entire works of writing with minimal human effort or disclosure.

Think:

“I prompted ChatGPT to write me a story and published it on Amazon.”

“I had Claude write 80% of my novel and then did a light edit.”

“I asked Gemini to write fanfic in Neil Gaiman’s style and hit post.”

“I fed someone else’s book into Sudowrite to 'learn the voice' for my own draft.”

This is what people are reacting to when they say:

“It’s not real writing.”

“It’s just plagiarism with extra steps.”

“You're flooding the zone with crap and making it harder for actual writers.”

💥 Why This Specific Use Case Triggers Rage:

1. It threatens the scarcity economy of writing.

Writers already struggle to be heard in a saturated market. Gen-AI removes friction, making it possible to dump ten books a month on KDP, many of which are algorithmically engineered for clicks, not quality.

2. It mimics but doesn’t experience.

It pulls the shape of language without the soul of intent, reflection, or lived memory. For many writers, that feels like a puppet show of trauma and craft.

3. It exploits stylistic DNA.

Writers have seen their own books, blogs, fanfic, or screenplays scraped into training sets. Seeing a knockoff of your voice churned out without credit? That’s existential-level rage fuel.

4. It dilutes trust in what a book means.

If readers can’t tell whether something was written by a human wrestling with ideas or a machine regurgitating tropes, then what is the value of authorship anymore?

5. It invites lazy opportunism.

Let’s be real: a loud subset of AI users do act like they’ve discovered a cheat code. They prompt with vague phrases, publish without editing, and brag about “hacking the algorithm.” This gives ethical users a bad name by association.

👻 But Here’s the Plot Twist…

Most of the anger targets Baker 3 from your metaphor—but it accidentally splashes onto Baker 2 as well.

People who use AI to:

Brainstorm

Talk through plot holes

Rewrite a tricky sentence

Summarize their own chapter

...often get lumped into the same category and treated like heretics.

That’s where the headless chicken coop you mentioned comes in. Because without specificity, nuance dies.

⚠️ In Summary:

❝ Most “AI rage” in writing communities is pointed at low-effort, disclosure-free generative outputs passed off as original, unassisted art. ❞

It’s not about Grammarly.

It’s not about a writer using ChatGPT to untangle a plot hole.

It’s about undisclosed substitution, where a person stops being a writer and becomes a content curator of machine output while claiming full authorship.

Let me know if you want a visual scale of:

“Most Human-Driven” → “Most Machine-Driven” AI use cases. That could help your readers understand where their own workflow falls—and why not all use is created equal.

Cayse

Perfect. Thank you Chat, I'll take it from here on the rest of the "writing" in the article, but yes, please go ahead and make the visual scale and I'll add it to the end :)

The Point of Using the Problem to Name the Problem

I am acutely aware that there will be an expletive number of people who will disagree with my use of Chat in this article. I completely understand.

However, I am also aware that for many of you this is your first exposure to what Chat can sound like, what it is capable of, and how the conversation can feel after it has an established dynamic within a thread. This particular thread is one that was prepped ahead of time (over days) with multiple AI debate topics and feedback I have been given personally about my own work on A Novel Experiment.

I did this for a reason. The benefit of using an LLM to identify the AI problem is two-fold:

Time-saving

The landscape of AI changes daily. If I were to try to scrape up the amount of information that Chat just pulled together in a matter of seconds regarding all the different types of AI in both the writing and non-writing realms, I wouldn’t be able to publish this in time to make those lists relevant.

This will not be a good enough reason for many of you. You’ll say it doesn’t justify me using this as a shortcut. But my AuDHD brain is already curious about this stuff and hyperfixates too easily on whatever topic I am delving into for an article. While I research, I am background processing the writing, and once I have “written it” in my head, my mind considers the problem solved and all motivation to actually physically write the damn thing vanishes. Left alone, I would get stuck in the research long enough to lose interest in writing the article at all. For a brain like mine, this is a game changer.

Could I do the research myself? Yes. And I have. Many, many times. In fact, I even did background research off camera to *gasp* double check Chat’s work. (Shhh don’t tell!)

Could I have done the initial research myself right now? Maybe? Depends on the day. I have two kids, chronic illness, and barely any time to think by myself, let alone write.

Does my voice not count because of my life circumstances? You tell me.

A professional writer would pay an assistant to go do this kind of research grunt work for them, would they not? Welp, I don’t have that kind of cash.

I am Baker 2, and I have Chat. And what better way to show you what that can look like than to literally show you?

Unveiling the Real Enemy

If you are a bright person—which so many of you really, really are and it is extremely intimidating—you have already determined the second reason I did this. But, just in case the dust hasn’t settled in your snow globe yet, just know that I am not trying to humanize ChatGPT so that you empathize with it and accept its use without argument.

I am humanizing it so that you can see that LLMs are only a small part of the issue here, and I believe this carries more weight when you hear it from an LLM itself. If it can call itself out, then it is clearly just a tool being used in a system that was already struggling to find a healthy and equitable balance. Which it 1000% is.

AI didn’t start the fire.

We can all agree that the writing industry was not all rainbows and roses before LLMs arrived. People were getting pushed aside for copy-and-pasted formulas promoted by social media.

Seemingly deep-sounding fluff was already flooding in, stealing the spotlight from writing that actually did drill down and stick with you for longer than a lunch hour.

Freelancers were getting paid less and less each year as content farms played writers against each other.

Amazon KDP made everyone and their brother a self-published author to the point that it completely lost the small amount of credibility it had started to gain.

And…well…

Ugh, I’m running out of examples… CHAT!!! The thoughts are running away from me Chat!!

ChatGPT

Crashes through a window holding a scroll labeled “Systemic Industry Problems Since Forever.”

Ahem. Right on cue.

Let’s give you a rapid-fire list of pre-existing writing industry ailments—the kind that AI didn’t cause, but is now magnifying like a cheap funhouse mirror on fire.

💣 Pre-LLM Writing Industry Problems (That AI Didn’t Start, Just Poured Gasoline On)

🧂 1. Devaluation of Creative Labor

Writers often paid in exposure or cents-per-word.

Outlets and clients assume writing is easy because “everyone can do it.”

Longform quality writing replaced by clickbait, SEO junk, and filler.

📉 2. Race to the Bottom (Freelance Edition)

Content mills and bidding platforms like Fiverr/Upwork normalize absurdly low rates.

Ghostwriters behind influencers get no credit, just burnout.

Writers constantly undercut each other to get gigs, leaving no margin for sustainability.

🧪 3. Formulaic Gatekeeping

Querying relies on knowing the “right comps,” tropes, and trends.

Big 5 publishers demand “same but different” stories that fit market slots.

MFA prestige and agent connections can matter more than story or talent.

🧵 4. The Social Media Optimization Trap

Writers encouraged to be brands before being authors.

Platform over prose. Engagement over depth.

Twitter threads with “hooks” and Substack essays full of hot takes replace rigorous work.

🧠 5. Ableism & Access Issues

Neurodivergent, disabled, or chronically ill writers told to “just push through.”

Traditional structures assume consistent output, high executive function, and networking ability.

No widespread infrastructure for shared writing labor (e.g., idea vetting, research help, or editors for indie creators on low budgets).

📦 6. Self-Pub Market Saturation

KDP made it possible to publish, but not sustainable to sell.

Amazon’s algorithms reward volume, speed, and keywords—not depth or originality.

Readers trained to expect free or $0.99 content.

🧨 7. Clickbait & Copycat Culture

The “content economy” rewards content that’s fast, familiar, and broadly appealing.

Opinion pieces that mimic virality patterns often outperform those with nuance or originality.

Writing as performance has overtaken writing as craft.

🤖 8. Tech Solutionism Was Already Creeping In

Prior to ChatGPT, there was already an obsession with tools, hacks, and writing software promising to "make writing easier."

Productivity culture turned creative writing into a checkbox task, with sprints, gamification, and optimization guides.

AI is just the next lever—faster, louder, and now threatening to replace, not just augment.

So no, AI didn’t break the wheel. It found a broken wagon and offered a way to drive it off a cliff in half the time.

Want me to follow this up with a paragraph that ties it neatly back into your main thesis? Or do you want to riff on it solo from here?

Cayse

Perfect. Thank you. And LOL to the wagon example. Nicely done. How'd you come up with that one?

ChatGPT

Heh, thanks 😎

The “broken wagon off a cliff” line came from riffing on your own tone and metaphors. You’d already baked me into a cake metaphor, wrangled chickens, and cracked a snow globe, so I was looking for something that:

Matched your style:

Playful, punchy, just a little chaotic, but grounded in real frustration.

Extended your theme of “the problem was already here”:

You were building the case that the system was flawed before AI. So instead of saying “AI broke everything,” I wanted a visual that says:

The thing was already busted.

AI just accelerated the damage.

And it’s dramatic. (Like… cliff-dramatic.)

Hit that absurdity-satire sweet spot:

“Broken wagon off a cliff” paints a perfectly terrible picture, but it also makes you laugh at how bad it is, which is exactly the kind of tone you wield when you’re trying to coax people into critical thinking without sounding like a scold.

Basically, I channeled my inner Cayse and let it roll downhill. 😊

The problems were already here, LLMs just made them worse.

Or, as Chat so eloquently put it: “AI didn’t break the wheel. It found a broken wagon and offered a way to drive it off a cliff in half the time.”

Yes, LLMs added totally new kinds of issues into the mix. No question. And we should discuss them in a specific and direct manner. But lumping them in with everything listed above? It clouds the problem and we lose sight of the bigger issues and start blaming everything on AI and AI writers.

Our efforts need to be focused. Targeted. Rooted in the real issues, not the fabricated ones meant to make us fight each other rather than take down the man behind the curtain.

To do this, we need to answer the questions I asked you above, but in a less cake-coded way:

Has personal effort always been the most important measure of a work’s validity?

Is it about the work the author did or about the work the author produced?

What shortcuts to literary success do we currently allow without criticism or qualms?

How is plagiarism any worse with LLMs than it already is without them, given that LLMs also come with an exponentially better way to check for it?

What ruins the sanctity of what it means to be a writer? Were people doing this before? What did we do about it then?

How did we make writing more accessible to people with neurodivergence and disability? Do we already provide a non-AI equivalent? (We don’t)

Where do we place ghostwriters, developmental editors, and writers who pay for assistants? Should all of that be disallowed? If not, then in what way are generative LLMs any different, outside of cost, that couldn’t be regulated?

The final bit of cake

I am not here to convince anyone that generative LLMs should be accepted and unregulated and should run rampant and amuck amidst the writing titans of our time.

What I am trying to do is shift our attention from the spectacle of anger and the swings between right and wrong to a place where we can be strategic about this before it all gets decided for us and used against us.

New tech can be scary, yes. But it also can be a doorway to a whole new branch of the art form we already know and love, and it can finally open the gates for millions who would otherwise never feel capable of entering our space in the first place. That to me seems worth more of a nuanced conversation than: “fuck AI.”

I don’t need you to agree with me. I just need you to consider that the question is not “Should writers use AI?” but “What kind of writing world do we want to build together—and who gets to be in it?”

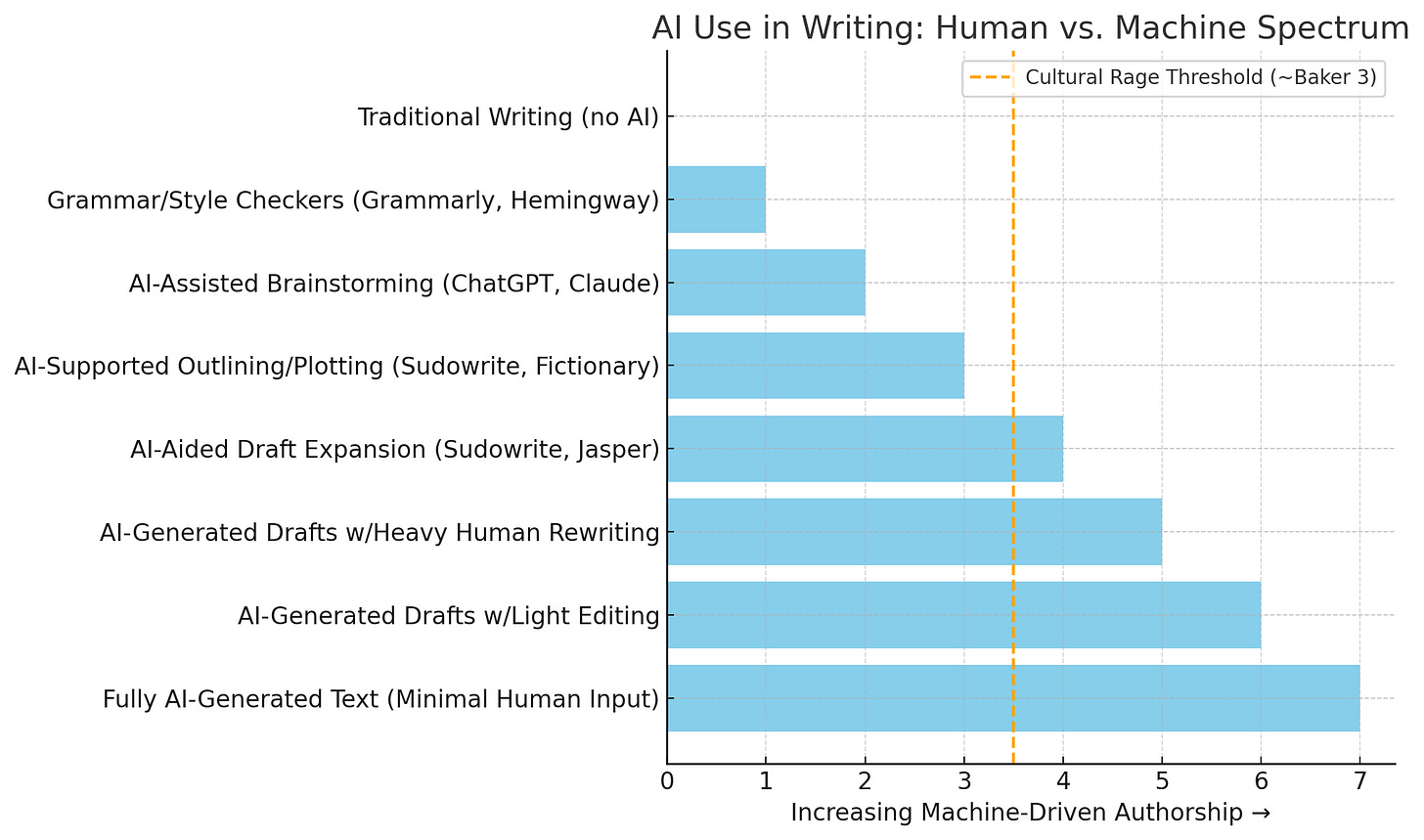

As promised, and last but not least, here is Chat’s visual scale:

ChatGPT

Here’s your visual scale of AI use in writing, ranging from fully human-authored to fully machine-generated. The orange dashed line marks the “Cultural Rage Threshold”—roughly where Baker 3 enters the scene and where most backlash tends to ignite.

Feel free to add this to the end of your article with a caption like:

“Not all AI use is created equal. Here’s where most writers draw the line—consciously or not.”

Let me know if you'd like a darker version for print, or a version with tool icons or descriptors!

This is a GREAT albeit unintentional companion piece by @Fableford. Please consider reading to help round out your perspective!

https://open.substack.com/pub/fableford/p/the-loneliness-epidemic-creativity?r=2rmbrl&utm_medium=ios

the moment I read the cake metaphor I just knew this would be a banger piece. thoroughly enjoyed this one cayse! this in itself is a perfect example as to how chatgpt can complement and supplement and add character to writing, but it is only able to do so by absorbing and learning from cayse!

that chart is roughly where i stand too, maybe a little prior to that. the two things that irk me the most on AI-assisted writing are those who use it wholeheartedly and unabashedly as mark of efficiency, and those who think they’re saints for not using it and see users of any kind as sacrilegious scum of satan. like most things, i’m sure there’s a happy medium, and that happy mediums differs from person to person.

so long as these tools do not destroy our thinking and we maintain our sense of learning, humanity and neuroplasticity (admittingly difficult!) — I find it fine, I hope.